INCOME SHARE AGREEMENTS

Purdue, BFF, the national conversation

“Long discussed in college policy and financing circles, income share agreements, or ISAs, are poised to become more mainstream.”

That’s from a September Wall Street Journal article. 2017 saw new pilots and the introduction of legislation in Congress, as well as the continued growth of Purdue’s Back a Boiler program, whose coverage on PBS NewsHour featured JFI staff.

Better Future Forward (incubated by JFI) was founded to originate ISAs and structure ISA pilots. Launched in February 2017 with support from the Arnold Foundation and the Lumina Foundation, BFF has formed partnerships with (and funded students through) Opportunity@Work, College Possible, and the Thurgood Marshall College Fund.

Various research organizations are tracking ISAs closely:

From the American Academy of Arts and Sciences, a 112-page report with “practical and actionable recommendations to improve the undergraduate experience”: “What was once a challenge of quantity in American undergraduate education, of enrolling as many students as possible, is now a challenge of quality—of making sure that all students receive the rigorous education they need to succeed, that they are able to complete the studies they begin, and that they can do this affordably, without mortgaging the very future they seek to improve.” Link to the full report. See page 72 for mention of ISAs.

From the Miller Center at the University of Virginia (2016): “ISAs offer a novel way to inject private capital into higher education systems while striking a balance between consumer preferences and state needs for economic skill sets.” Link.

From the Aspen Institute: “ISAs are useful beyond traditional colleges and universities. As the economy changes and more jobs are automated, programs to retrain and upskill workers, such as ‘coding boot camps,’ are emerging. These programs can be expensive, making them inaccessible to lower-income workers. ISAs can reduce this financial barrier.” Link.

- In January, a research team at Stanford led by economist Raj Chetty published a groundbreaking assessment of the outcomes American colleges and universities generate for their students. Analyzing special-access IRS tax data that had never been used for this purpose, the researchers were able to identify the educational institutions that regularly turn low-income students into middle-class adults. The research findings are available in a number of forms here. The New York Times produced a summary of (and interface for) the data here.

Will comments: “The promise of ISAs is that they preoccupy funders with institutional outcomes: retention, graduation, and (remunerative) job placement. Capitalizing on that promise requires access to that data, and on this front, Raj Chetty’s work is a watershed. We use it to inform our partner selection—identifying schools that reliably and affordably serve poor students—and to power our underwriting model.”

- One of the most promising developments in higher education this year was the proposed College Transparency Act. Here is a piece about the bill as originally conceived; a more recent piece describes some changes.

- Do we need more universities? Lyman Stone analyzes the economic role of the US higher education system: “The problems we face are: (1) the regional returns to higher education are too localized, (2) the price of higher education is bid up very high, (3) the traditional entrepreneurial player, state governments, is financially strained or unwilling, (4) private entrance is systematically suppressed by unavoidable market features.” Link. Stone was responding to a Douthat column called “Break Up the Liberal City”; Noah Smith also weighed in. See also this 2011 paper from the New York Fed, “The Role of Colleges and Universities in Building Local Human Capital.”

UNIVERSAL BASIC INCOME

New pilots and analysis

Three important basic income initiatives launched in the United States in 2017: Stockton, Oakland, and Hawaii. In the broader basic income discussion, alternative versions drew increasing attention.

The Economic Security Project has announced a partnership with Stockton Mayor Michael Tubbs to build a UBI pilot. Link to the official announcement. For a recent, comprehensive literature review of existing UBI studies, see this report from the Roosevelt Institute. Coverage of the Stockton project: Vox, the Atlantic.

Y Combinator outlines its upcoming large-scale randomized controlled trial: “We tentatively plan to randomly select 3,000 individuals across two US states to participate in the study: 1,000 will receive $1,000 per month for up to 5 years, and 2,000 will receive $50 per month and serve as a control group for comparison.” Link.
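For a rough sense of scale, the transfers implied by the quoted design can be tallied directly. The sketch below assumes every participant is paid for the full 60 months (the quote says "up to 5 years") and ignores administrative and research costs; the function name is illustrative, not from Y Combinator.

```python
def total_transfers(group_size, monthly_payment, months):
    """Upper bound on cash transferred to one study arm, in dollars."""
    return group_size * monthly_payment * months

# Assumption: everyone is paid for the full five years ("up to 5 years").
MONTHS = 5 * 12

treatment = total_transfers(1_000, 1_000, MONTHS)  # 1,000 people at $1,000/month
control = total_transfers(2_000, 50, MONTHS)       # 2,000 people at $50/month

print(f"Treatment arm: ${treatment:,}")
print(f"Control arm:   ${control:,}")
print(f"Total:         ${treatment + control:,}")
```

Under those assumptions the treatment arm accounts for about ten times the transfers of the control arm, even though it has half as many participants.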

In July, Hawaii announced it would take the first steps towards instituting a basic income guarantee. Light context and explanation: FastCo, Vox. Here is the bill text, which cites automation and widening inequality as motivations for investigating UBI, and Hawaii State Representative Chris Lee’s Reddit announcement of the bill, in which he gives thanks to r/BasicIncome and r/Futurology for their inspiration.

The public discussion is becoming more sophisticated, including critiques of UBI as well as alternative forms:

- Roosevelt Institute study on how to pay for UBI: “Our results are very clear: enacting a UBI and paying for it by increasing the federal debt would be expansionary, because it would increase aggregate demand.” Link. Dylan Matthews’s commentary enumerates the assumptions of the authors.

- Nicolas Colin’s critique of the idea brings history and French policy to bear on the question. “Basic income is to the social state what the flat tax is to the tax system. It flatters the engineering mind with its apparent simplicity. But in fact it is impossible to implement; it’s also politically suicidal; nobody’s ready to die for it; and even if it existed, it would probably trigger extraordinary political tension and the highest level of inequality in modern Western history.” Full article here. The piece also contains a multitude of interesting links to other pieces on topics like benefit portability and the “submerged state.”

Michael comments: The headline, “Enough With This Basic Income Bullshit,” is overblown, but this is nonetheless an excellent article. Colin makes a convincing case for a much more sophisticated understanding of which possible economies UBI best fits (and for the need to understand the history and political economy of the welfare state before trying to design a quick fix).

- Matt Bruenig on the Finnish not-actually-universal Basic Income: “But Finland’s experiment has its dark sides as well, especially for those who are concerned that a poorly designed UBI could undercut the welfare state without truly liberating anyone.” Link.

- Social wealth funds/sovereign wealth funds are also part of the conversation. Matt Bruenig again wrote this much-discussed New York Times op-ed: “There’s a tried and tested way, within the system we have now, of giving everyone a share in the investment returns now hoarded by the wealthy. It’s called a social wealth fund, a pool of investment assets in some ways like the giant index or mutual funds already popular with retirement savings accounts or pension funds, but one owned collectively by society as a whole.” Noah Smith responds; Matt Stoller responds. See also Roger Farmer’s 2016 book Prosperity for All, and a related VoxEU piece from 2009.

ALGORITHMIC DECISION-MAKING

Fake news and recommender systems, the ethics of algorithms, XAI

As social awareness of the pervasiveness of machine learning decision tools grows, so too do the social and political questions about them – around everything from the reliability of fake news detection AIs to potential bias in bail-eligibility algorithms.

On fake news:

This article describes new attempts to use AI to spot fake news: “AdVerif.ai isn’t the only startup that sees an opportunity in providing an AI-powered truth serum for online companies. Cybersecurity firms in particular have been quick to add bot- and fake news-spotting operations to their repertoire, pointing out how similar a lot of the methods look to hacking. Facebook is tweaking its algorithms to deemphasize fake news in its newsfeeds, and Google partnered with a fact-checking site—so far with uneven results. The Fake News Challenge, a competition run by volunteers in the AI community, launched at the end of last year with the goal of encouraging the development of tools that could help combat bad-faith reporting.”

- Here’s more on the Fake News Challenge; an automated fact-checking startup; and Gobo from the MIT Media Lab (“Take control of your feed”).

- On YouTube’s controversial kids’ videos: “These videos, wherever they are made, however they come to be made, and whatever their conscious intention (i.e. to accumulate ad revenue) are feeding upon a system which was consciously intended to show videos to children for profit. The unconsciously-generated, emergent outcomes of that are all over the place.” Link to James Bridle’s post. A piece on the same topic in the New York Times. From the Verge, after the two pieces above were published: “YouTube says it will crack down on bizarre videos targeting children.” Link.

- A 2014 Slate Star Codex piece on similar themes: “Every single citizen hates the system, but for lack of a good coordination mechanism it endures. From a god’s-eye-view, we can optimize the system to ‘everyone agrees to stop doing this at once’, but no one within the system is able to effect the transition without great risk to themselves.” Link.

On questions of justice and fairness:

Key notions of fairness contradict each other—something of an Arrow’s Theorem for criminal justice applications of machine learning.

“Recent discussion in the public sphere about algorithmic classification has involved tension between competing notions of what it means for a probabilistic classification to be fair to different groups. We formalize three fairness conditions that lie at the heart of these debates, and we prove that except in highly constrained special cases, there is no method that can satisfy these three conditions simultaneously.”

Full paper from Kleinberg, Mullainathan and Raghavan here.
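The tension can be seen in a toy case. The sketch below is an illustration with made-up numbers, not the paper's proof: two hypothetical groups receive risk scores that are calibrated within each group (of the people scored 0.2, exactly 20% are true positives, and likewise for 0.8), but the groups differ in base rate. The other two conditions, balance for the positive class and balance for the negative class, ask that true positives (and true negatives) receive the same average score in both groups. All names and group sizes are assumptions for illustration.

```python
from fractions import Fraction

def summarize(bins):
    """bins: list of (score, n_people). Each bin is calibrated by construction:
    a fraction `score` of the people in it are true positives."""
    positives = sum(score * n for score, n in bins)
    negatives = sum((1 - score) * n for score, n in bins)
    # Average score received by true positives and by true negatives.
    avg_pos = sum(score * (score * n) for score, n in bins) / positives
    avg_neg = sum(score * ((1 - score) * n) for score, n in bins) / negatives
    return {
        "base_rate": positives / (positives + negatives),
        "avg_score_positives": avg_pos,
        "avg_score_negatives": avg_neg,
    }

LOW, HIGH = Fraction(1, 5), Fraction(4, 5)   # scores 0.2 and 0.8
group_a = [(LOW, 50), (HIGH, 50)]            # hypothetical group, base rate 0.50
group_b = [(LOW, 80), (HIGH, 20)]            # hypothetical group, base rate 0.32

for name, bins in [("A", group_a), ("B", group_b)]:
    stats = summarize(bins)
    print(name, {key: round(float(value), 3) for key, value in stats.items()})
```

Both groups are scored by the same calibrated rule, yet true positives in group A average a score of 0.68 against 0.50 in group B, and true negatives average 0.32 against roughly 0.235. Calibration holds while both balance conditions fail, which is the kind of conflict the paper shows is unavoidable outside special cases such as equal base rates.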

- In a Twitter thread, Arvind Narayanan describes the issue in more casual terms: “Today in Fairness in Machine Learning class: a comparison of 21 (!) definitions of bias and fairness…”

- A critique of this ProPublica story on the COMPAS algorithm used for criminal sentencing: the issue with the paper comes down to competing definitions of fairness. Link.

- The AI Now Institute is a leader in this space; coverage of the initiative in the MIT Technology Review. DeepMind is also devoting some attention to these issues with its new “Ethics & Society” research unit. Wired notes interest in the topic at the NIPS conference earlier this month: “Alongside the usual cutting-edge research, panel discussions, and socializing: concern about AI’s power.”

On explanation and AI:

“The disconnect between how we make decisions and how machines make them, and the fact that machines are making more and more decisions for us, has birthed a new push for transparency and a field of research called explainable A.I., or X.A.I. Its goal is to make machines able to account for the things they learn, in ways that we can understand.” An overview piece in the Times here.

Jay comments: Many authors (including the Harvard group) demand that the process of explanation be determinate and consistent across contexts. Assume that it is. If an algorithm lends itself to rational reconstruction, it seems that the features to which the algorithm responds must be to some extent recognizable to humans, and the decision process by which the algorithm uses them to classify must be to some extent expressible to humans. But this presents a dichotomy. If the features perfectly reflect human-recognizable features, and the process is easily describable, this makes the algorithm look very like a decision tree or similar. For most of the tasks in question, these perform poorly. On the other hand, if the interpretations are themselves only vague or rough analogies, then it’s not clear how reliable such explanations are: our rational reconstructions seem more creation than recreation. There’s a trade-off between how well the algorithms work, and how well they can be explained. The real question, it seems, is how to balance this trade-off.

Jay on further reading: At least to the extent that successful development of XAI depends upon a coherent picture of explanation, those working in the field would do better by learning from the work that’s gone before, rather than trying to re-discover everything from scratch… Michael Strevens, Elliott Sober, Philip Kitcher, Bas van Fraassen, Brad Skow, and Robert Batterman are some of the key figures.

JFI STAFF FAVORITES OF 2017

On altruistic punishment and modeling cooperation:

“In anonymous experiments intended to mimic collective action situations, strong reciprocators tend to punish free-riders, even when they are not the direct victims, and even when there is no clear or assured benefit in the future from doing so. ‘Strong reciprocity’ could be the psychological basis of the outrage that one sees in reaction to a social norm violation like, say, queue-jumping.

…“The standard solution is for the state — a third-party enforcer — to punish the louts and ensure compliance with the rules of the game. But the state has what’s called in political economy parlance a ‘commitment problem’: if it’s strong enough to enforce the rules of the game, then it’s also strong enough to manipulate them in its own interests, or in the interests of the powerful which control the state. So why on earth would the state act altruistically to solve the coordination problem in the public interest? Many issues of governance — such as corruption or patronage politics — in one way or another, boil down to the inability of a population to coordinate on an agreed-upon set of rules, because there exists no disinterested nth-order enforcer.

“Yet, despite public choice theory, despite capture by special interests, we know that the state in well-functioning societies more or less acts in the public interest most of the time. In other words, somehow collective action problems get solved; somehow socially productive cooperation happens.”

Full post on PSEUDOERASMUS here.

BENJAMIN ALLEN writes in Aeon about the method, result, and use of a simplified mathematical model of society:

“An earlier study had examined a simple case of this model, in which each individual has the same number of neighbors. They found that, for cooperation to flourish, the benefit-cost ratio of cooperation must be greater than the number of neighbors per individual. For example, if everyone has exactly five neighbors, cooperation succeeds if it provides at least five times as much benefit as the cost a cooperator pays. But while this is a beautiful result, its applicability is limited: in typical real-world networks, individuals differ widely in their number of neighbors, with some having a great many neighbors and others having very few.

“We found a way to calculate whether cooperation is favored on any network. The key quantity is the critical benefit-cost ratio. If this ratio is three, for example, then any cooperative behavior providing a threefold-or-greater benefit is favored. We showed how to calculate the critical cost-benefit ratio of any given network by solving a system of linear equations (a mathematically straightforward task). The smaller this ratio, the easier cooperation is to achieve. But for some networks, this ratio is negative, which means that cooperation is never favored to evolve.”
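The threshold rule described in the two quoted paragraphs can be written down in a few lines. This is a minimal sketch of the decision rule only; it does not compute the critical ratio for an arbitrary network (the linear system the authors mention), and the function name is hypothetical. For the earlier special case where everyone has the same number of neighbors, the passage gives the critical ratio as that number of neighbors.

```python
def cooperation_favored(benefit, cost, critical_ratio):
    """Return True if cooperation is favored under the quoted threshold rule.

    critical_ratio: the network's critical benefit-cost ratio, taken as given.
    """
    if critical_ratio < 0:
        # Per the passage: a negative ratio means cooperation is never favored.
        return False
    # "Any cooperative behavior providing a threefold-or-greater benefit is
    # favored" when the ratio is three, so the comparison is inclusive.
    return benefit / cost >= critical_ratio

# Regular network where everyone has exactly five neighbors: ratio is 5.
print(cooperation_favored(benefit=6, cost=1, critical_ratio=5))   # benefit is 6x cost
print(cooperation_favored(benefit=4, cost=1, critical_ratio=5))   # benefit is 4x cost
# A network with a negative critical ratio: no benefit is large enough.
print(cooperation_favored(benefit=100, cost=1, critical_ratio=-2))
```

The smaller the critical ratio, the lower the bar a cooperative behavior has to clear, which is why the passage treats it as a measure of how easy cooperation is to achieve on a given network.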

- Steve Randy Waldman with more: “Altruistic punishment is essential to human affairs but it is hard. It is mixed, it is complicated, it is shades of gray. It is punishment first and foremost, and punishment hurts people, that’s its point. Altruistic punishment hurts the punisher too, that’s why it’s ‘altruistic’. It can’t be evaluated from the perspective of winners or losers within a direct and local context. It is a form of prosocial sacrifice, like fighting and dying in a war.” Link.

On revolutions:

“Revolutions, The Great Leveler explains, act a lot like wars when it comes to redistribution: they equalize access to resources only insofar as they involve violence. The communist revolutions that rocked Russia in 1917 and China beginning in 1945 were extremely bloody events. In just a few years, the revolutionaries eliminated private ownership of land, nationalized nearly all businesses, and destroyed the elite through mass deportations, imprisonment, and executions. All of this substantially leveled wealth. The same cannot be said for relatively bloodless revolutions, which had much smaller economic effects. For example, although the Mexican Revolution, which began in 1910, did lead to the reallocation of some land, the process was spread across six decades, and the parcels handed out were generally poor in quality. The revolutionaries were too nonviolent to destroy the elite, who regrouped quickly and managed to water down the ensuing reforms. In the absence of mass violence concentrated in a short period of time, Scheidel infers, it is impossible to meaningfully redistribute wealth or substantially equalize economic opportunity.”

TIMUR KURAN finds more reasons for optimism than Scheidel does, noting that Scheidel’s book “focuses on inequality within nations, paying little attention to inequality among nations…global inequality has lessened dramatically since World War II.” Full piece in Foreign Affairs here.

- The Economist’s take: “Scheidel follows Max Weber, one of the founders of sociology, in seeing democracy as a price elites pay for the co-operation of the non-aristocratic classes in mass warfare, during which it legitimises deep economic levelling.”

On airlines and our disintegrated polity:

“Building transportation infrastructure provides and encourages economic development within far-flung communities, reducing the geographic disparities that now threaten the viability of the United States as an integrated polity. But transportation infrastructure also very directly binds distant parts of the polity together, and reduces the likelihood that dangerous disparity will develop or endure. If Cincinnati has abundant and cheap air transportation capacity that will remain whether it is fully utilized or not, firms in New York and DC and San Francisco will start thinking about how they can take advantage of the lower costs of those regions in a context of virtual geographic proximity. When decisions about transportation capacity are left to private markets, a winner-take-all dynamic takes hold that is understandable and reasonable from a business perspective, but is contrary to the national interest.”

STEVE RANDY WALDMAN explains how essential infrastructure integration is to preventing geographic inequality. Full post here.

- Steve links to Matt Stoller on airline regulation and Phillip Longman on regional inequality.

On cost disease:

“So, to summarize: in the past fifty years, education costs have doubled, college costs have dectupled, health insurance costs have dectupled, subway costs have at least dectupled, and housing costs have increased by about fifty percent. US health care costs about four times as much as equivalent health care in other First World countries; US subways cost about eight times as much as equivalent subways in other First World countries. I worry that people don’t appreciate how weird this is. I didn’t appreciate it for a long time.”

SCOTT ALEXANDER’s post is an armchair-analysis masterpiece (casual, curious, wide-ranging). Full post here.

From Random Critical Analysis, see a detailed post titled “High US healthcare spending is quite well explained by its high material standard of living”:

“Total per capita health care spending increases as wealth increases because people actually demand more goods and services (volume) per capita and because it is relatively labor intensive sector that does not enjoy the productivity gains found in some other sectors of the economy, i.e., overall costs increase through both volume and price together (volume * price). GDP per capita is a relatively weak measure for these purposes and those few other high GDP countries happen to be much more export dependent (which does not independently predict significant increases in expenditures). If you use a better measure like Actual Individual Consumption (AIC) or run multiple regression analysis on GDP expenditure categories most of the apparent excess health care spending shrinks quite dramatically.”

Full post here. And here’s a related post, “Towards a general factor of consumption,” with more details on AIC.

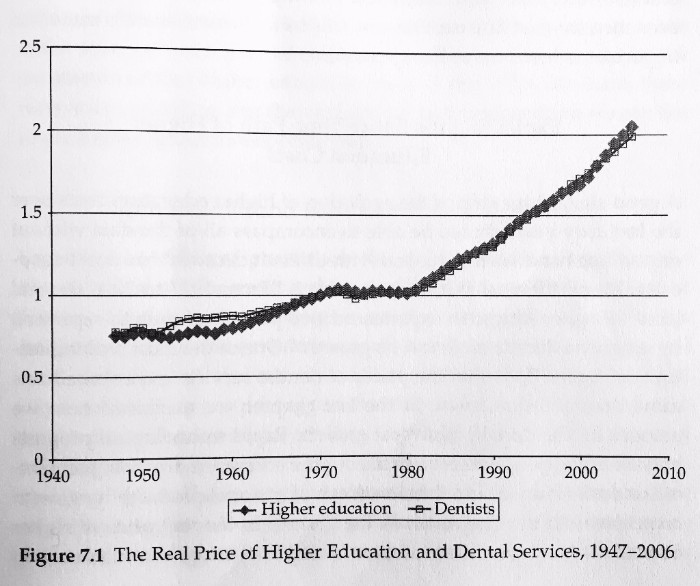

- Chart from Why does college cost so much?, by Archibald/Feldman. Michael comments: Multiple of price in real terms on the y-axis (70s dollars). In case there was any question as to whether cost disease is a cross-sector phenomenon.


Each week we highlight research from a graduate student, postdoc, or early-career professor. Send us recommendations: editorial@jainfamilyinstitute.org