Since the late 1970s, cutting-edge semiconductors have figured at the heart of the political economy of the United States. Often called the “crude oil of the information age,” they have become increasingly ubiquitous and are now considered the basic building blocks of a broad swath of industry, including telecommunications, automobiles, and military systems. By the 1980s, semiconductors had become so significant to the economy that they came to be seen as symbolic of American power itself, and as the essential element in the country’s post-Cold War future. In 2003, a National Academies report could describe the semiconductor as “the premier general-purpose technology of our post-industrial era. In its impact, the semiconductor is in many ways analogous to the steam engine of the first industrial revolution.”1

Historically, the success of the US semiconductor industry was built on a collaboration between state and capital. When Japan threatened to dominate the field in the 1980s, US policymakers worked to ensure US dominance. In recent years, however, interventionist policy has fallen out of favor, and American chipmakers like Intel now find themselves lagging behind competitors in Taiwan and South Korea. Following the panic that surrounded pandemic supply-chain shortages, semiconductors have returned to their earlier status as a litmus test for American power and decline. President Joe Biden’s executive orders, as well as the CHIPS Act of last year, signal a government effort to “make America(n industry) great again.” In that sense, these latest moves are something of a return to earlier policies that foregrounded intervention, rather than a declaration of “world war,” as some have suggested. Whether they will have the desired effect remains to be seen: the money earmarked for production in the CHIPS Act falls short of the capital investment required to truly revive the industry.

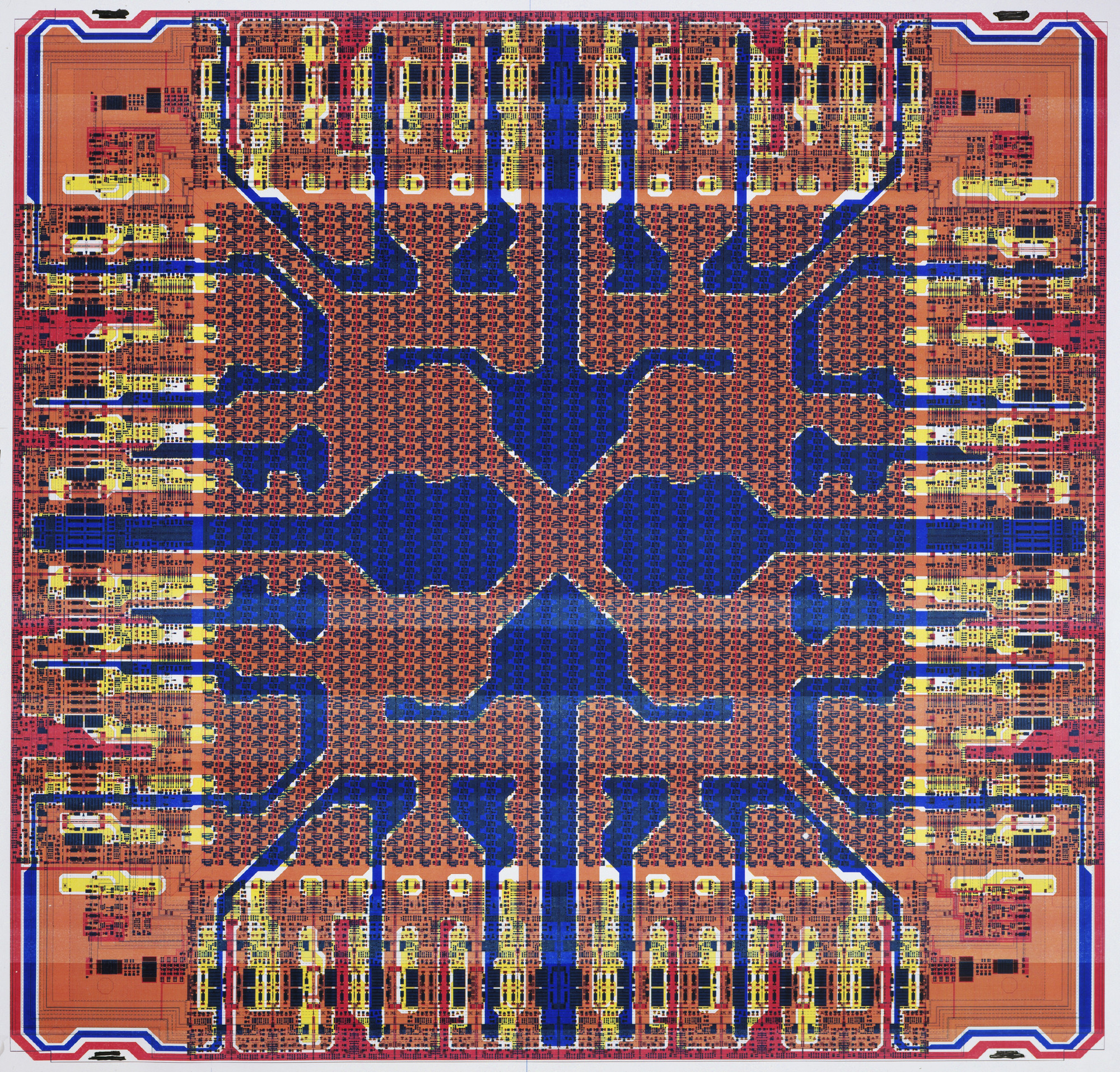

The rise of Intel

The story of America’s post-Cold War dominance in semiconductors is in many ways the story of Intel. Incorporated in Mountain View, California, in 1968 by Fairchild employees Gordon Moore and Robert Noyce, the company focused on semiconductor production, and by the 1980s it had become one of the top ten producers in the world. Moore, while still at Fairchild, had predicted in 1965 that chips would get smaller, cheaper, and faster at regular intervals. Canny Intel publicists turned this into “Moore’s Law,” and by the 1990s it was widely touted as the natural cause of the company’s remarkable success.

Intel was formed when metal-oxide semiconductors, a marked improvement on the existing technology, had been prototyped but not yet widely produced. This good timing allowed Intel to reap the benefits of the boom it was about to generate. The company benefited from other strokes of luck in its early years. Importantly, in 1981 IBM chose Intel to supply chips for the IBM PC, its answer to Apple’s personal computers. Like many American semiconductor companies, Intel was threatened by Japan’s growing dominance in the field, but in 1985 it made the quick and advantageous decision to stop manufacturing memory chips (DRAMs), stemming its losses (Intel was losing $1 per chip). In the 1980s, US tax laws allowed domestic companies to write off the construction of new factories; chip capability doubled every two years, and profits surged. As competition with Japan ramped up, Intel sped up its product development cycle still further, until its chip capability was increasing at a dizzying pace: quadrupling every three years. The gambit pressured Japanese competitors to keep up. But whereas Intel planned to slow down after a couple of years and return to a profitable rate of production, Japanese industry had permanently altered its production model to match the new pace. This made it increasingly difficult for Japanese companies to turn a profit; combined with Japan’s economic woes in the early 1990s and diplomatic pressure from the US government, the squeeze caused the Japanese industry to falter. The US, and Intel in particular, reclaimed the lead. US industrial policy, favorable regulatory changes, the advantages conferred on Intel by the international roadmap, and the US government’s tolerance of Intel’s extremely close relationship with Microsoft (known at the time as “Wintel”) allowed the firm to maintain its dominance through the 2000s.

Success in the 1990s planted the seeds of future problems. Former Intel executive Paolo Gargini noted that Intel’s triumph in 1994 took the pressure off the government to maintain its rate of investment.2 As a result, by the early 2000s the US industry lacked resources just as it needed massive investments to convert to a new chip design that could continue Moore’s Law improvements. The US industry was forced to look beyond American shores. Because of his involvement with the US national semiconductor roadmap, among other things, Gargini was tapped to organize the rest of the US industry to support this shift. Likewise, because of his many trips to Japan on Intel’s behalf during the panic over Japanese competition, he was tasked with bringing Japanese industry figures into new international institutions. The US–Japan conflict cast a long shadow; Gargini claims that its memory made it difficult to convince US semiconductor figures to join international institutions such as the World Semiconductor Council and the International Semiconductor Roadmap. Nonetheless, he managed to convince US producers that “collaboration” was the way forward. But Sematech, the industry research consortium developed in the 1980s, was unwilling to test new chip prototypes. This led US producers to develop IMEC in Belgium, further removing pieces of the production process from US shores.

The new international roadmap preserved Intel’s lead until the 2010s by coordinating the international division of labor. In addition to maintaining the US lead in high-end chips, the roadmap also provided incentives for other international stakeholders. The conversion to a new chip design which precipitated this international turn gave figures like Gargini the opportunity to advocate for more government support. He found success, gaining political support for foreign negotiations to set up these international institutions. He also managed to secure more funding—and with it, industrial planning and other government resources—which was distributed through several government agencies.

Losing the edge

By 2016, however, Intel had lost its lead in cutting-edge manufacturing. Enormous transfers of technology had taken place since the 1990s, in broad daylight and without causing any alarm. The most significant were to the Dutch firm ASML, the only company that produces the EUV lithography equipment needed to make today’s most advanced chips,3 and to Taiwan Semiconductor Manufacturing Company (TSMC), which produces the largest share of cutting-edge chips globally. It was common practice in the semiconductor industry to sell technologies a few generations behind the leading edge to other foundries once the chips made with them had fallen in price. While these technology transfers raised few eyebrows at the time, they paved the way for the loss of American dominance.

By the early 2000s, it had become apparent across the industry that new manufacturing equipment was needed to keep up with the expectations set by Moore’s Law. During the 2000s, the transition to 300mm wafers and high-k metal gate transistors required all-new and extremely expensive factories. Many of the US companies that decided not to convert to 300mm relied on Taiwanese foundries to make their products. When they realized these foundries lacked the necessary technology, they sent their own development teams to Taiwan to upgrade it.4 This brought Taiwanese companies much closer to the leading edge. Realizing that Taiwanese foundries would become major customers, suppliers passed along additional technological knowledge they had gathered from other leading companies. At the same time, between 2006 and 2008, there was an exodus of Taiwanese Intel engineers back to Taiwan after Intel closed its second research facility in Santa Clara. These engineers, in their mid-to-late forties, went to TSMC with expertise in Intel’s technology.

Meanwhile, Apple’s growing success helped propel TSMC ahead of Intel. Around 2006, Apple offered Intel a contract to supply chips for the iPhone, but Intel declined because it expected to make too little money per chip. TSMC got the contract instead, and as demand for Apple products skyrocketed, both companies profited. By 2012, the design-manufacturing model pioneered by Apple had significantly transformed the electronics industry. Intel continued to specialize in expensive leading-edge chips, but it received little government investment, and its research and development (R&D) budgets dwindled. Confidence in US corporate dominance nonetheless remained high because of past successes, but by 2016, when the next generation of EUV lithography was rolled out, Intel found itself significantly behind its competitors. It still lags behind them today and looks unlikely to catch up any time soon. Several commentators have noted that the company’s financial future looks bleak, despite the CHIPS Act and other subsidies.

Government and industry

Even though it had become clear by the 2010s that American dominance in the industry was waning, the government chose not to step in to protect Intel, as it had for semiconductor firms in the 1980s when Japanese companies were taking the lead. In doing so, the government broke with decades of policy precedent that had consistently buoyed the American chip industry. It could likewise have addressed the risks that the industry’s growing concentration in a few firms posed to the reliable supply of cutting-edge chips, but it failed to do so. Examining the history of the semiconductor industry and the US state helps explain this American hubris.

Since the early Cold War, semiconductors have figured at the heart of US defense strategy, giving the US a technological edge to offset the Soviet Union’s manpower advantage. (This is known as the “Offset Strategy.”) US hubris in Vietnam was likewise driven in part by exaggerated claims about US technology’s ability to deliver precise command and control. This vision was partially realized later in Operation Desert Storm, where more advanced electronics allowed the US military to strike military targets with greater precision.5 In the late 1970s, with the rise of civilian computing, the industry underwent major structural shifts. Civilians, not the military, became the main consumers of chips, and large organizations such as Bell Labs, which had major research arms and subsisted on large government contracts, gave way to smaller start-ups focused on the civilian market. These smaller companies developed a close relationship with the US government when they began facing fierce international competition in the late 1970s and early 1980s. They needed the federal government not mainly as a source of contracts but, more fundamentally, to negotiate with foreign governments, secure favorable trade deals, provide subsidies, relax antitrust and other major regulations, and help centrally plan the industry and its suppliers.

Aggressive trade and diplomatic pressure characterized US strategy on semiconductors in the 1980s and 1990s. The US government threatened, and at points applied, sanctions against Japanese firms in an effort to ensure American dominance.6 The Reagan administration used sanctions and other threats to force Japan to cede 20 percent of the Japanese domestic market to foreign (namely US) chipmakers. In fact, US intervention on behalf of the semiconductor industry inaugurated an entirely new approach to trade policy, which infuriated right-wing think tanks like the Heritage Foundation at the time.8 By the mid-1990s, Democrats and Republicans had found common ground in using the success of the tech industry to promote right-wing narratives about the triumph of free markets, American exceptionalism, and the brilliance of US entrepreneurs, while the agencies conducting industrial policy for the “tech” sector were protected from neoliberal demands to shrink the state.

Influenced by the political strategy of the Atari Democrats, the Clinton administration embraced the tech industry with particular enthusiasm, believing it would help resolve the contradiction between “fiscal responsibility” on the one hand and the employment demands of its working-class constituents on the other. This was in part thanks to the rise of new growth theory in economics, and to industrial policy proponents like Robert Reich and Joseph Stiglitz, who foregrounded technology as essential to economic growth. They also believed that the tech industry was uniquely prone to “natural” monopolies, and that there was no way around this. In the mid-to-late 1990s, the US built a series of new post-Cold War international institutions, such as the World Trade Organization (WTO) and the World Semiconductor Council, that significantly favored US semiconductor and other information industries in the interest of preserving US hegemony. Similarly, the new international roadmap coordinated firms, suppliers, and other industry participants, creating “cooperation” among them within strict roles and hierarchies. The cooperation necessary to build and maintain the international roadmap was predicated on US firms’ dominance and encouraged industry consolidation.

Active government involvement in the industry began to wane under George W. Bush. Donald Rumsfeld had long wanted to hand tech policy to the Department of Defense and had tried to do so under Ford. During the Bush administration, the security state had a close relationship with the industry but exercised little direct control or management. Obama maintained this close relationship with Silicon Valley, continuing the Democrats’ pro-tech policies from the Clinton years. Crucially, by the mid-2000s, companies like Apple, Google, and Facebook needed the cheap chips manufactured by TSMC to succeed. For companies that focused on design, this was a good deal: they didn’t have to spend money on staggeringly expensive new 300mm factories (today, a typical fab costs around $4 billion, while cutting-edge fabs can cost $10 billion to $20 billion or more). This arrangement allowed smaller chip design start-ups to survive. It also benefited consumers in the short term, which gave the government another justification for its decision not to intervene. The US political class was not concerned about losing the cutting edge in chip manufacturing until it was too late.

It wasn’t until 2016 that the defense world began to worry about Intel and access to cutting-edge chips. Four years later, Covid-related supply-chain shortages of generic chips led US politicians to pay attention to semiconductors as well. The White House claims that the chip shortage cost the US a full percentage point of economic output. Because of a lack of technical knowledge in Congress (stemming from reforms like Gingrich’s elimination of the Office of Technology Assessment), policymakers conflated access to lower-end chips with Intel’s failure to stay ahead of its competitors. From their point of view, the drop in US production explained the supply-chain shortages. It was this conflation that produced the CHIPS Act and Biden’s executive orders, as well as renewed political pressure to increase both generic and leading-edge domestic chip production.

Present state of play

The Biden administration’s new executive orders limit Chinese access to semiconductors and semiconductor manufacturing equipment (including “human capital”). There’s reason to believe the orders are more rhetoric than substance. They are difficult to enforce, requiring both the cooperation of other countries and control of black and grey markets. They also do little to blunt China’s ability to advance technologically; in artificial intelligence applications, for instance, there are workarounds. NVIDIA, a major AI chipmaker, stated in its October business report that it will sell China slower dies but make up for it with a faster network. Moreover, the executive orders do not address the underlying problems with US industry.

Majority Leader Chuck Schumer’s statement on competition with China might as well have been lifted from the 1980s, with “China” substituted for “Japan.” He declared that “the Senate will work in several ways to further strengthen America’s position against China’s exploitative economic and industrial policies that aim to undermine American microchip manufacturing and other innovations, endangering our economic and national security.” Some on the left have expressed concern that these executive orders will strangle Chinese “technological progress,” but this too is an overstatement. Despite having spent more than three times the amount designated in the CHIPS Act, China produces no leading-edge chips and is in a period of political turmoil and economic downturn, stemming from both Covid-19 restrictions and a real estate market collapse. Much like Japan in the early 1990s, China is struggling to make a serious effort in chip manufacturing, with little chance of catching up to the far more costly leading edge. Bloomberg recently reported that the Chinese government is “pausing” investment in semiconductors. Still, Chinese industry could find some workaround. Unlike Russia, for example, China can buy from Europe. The US will likely try to block these attempts, but as Jon Bateman notes, “I question whether that’s a sustainable strategy for Washington to continually drag its allies kicking and screaming.”9

The dominance and evolution of the US chip industry have historically relied on both hot and cold conflicts, from Korea and Vietnam to Desert Storm and the “war on terror.” Wars prompted semiconductor improvements and provided places to test new technologies. With the war on terror officially over, the government now needs a new emergency to justify state investment in terms legible to the public. The major long-term concern for the US security state is that cutting-edge chips are no longer produced by US firms; they are produced by TSMC in Taiwan. This fact puts US security-state interests in conflict with China, which claims Taiwan as its own territory. A recent statement by US Representative Seth Moulton makes this clear: “If you invade Taiwan, we will blow up TSMC [Taiwan Semiconductor Manufacturing Company].”10

Can the US make its own cutting-edge chips? The efforts by US politicians to bring leading-edge chip production back to the US are notable but inadequate. Chip production is an extremely capital-intensive industry; new fabs cost between $4 billion and $20 billion to build. The CHIPS Act commits a one-time payment of $52 billion, of which only $39 billion is earmarked for “manufacturing incentives” and $11 billion for R&D. The Act has two main goals: to revitalize the domestic semiconductor industry and to bring supply chains back to the US.

The CHIPS Act aims to produce at least “two new large-scale clusters of leading-edge logic fabs…owned and operated by one or more companies.” Other targets for US production include advanced packaging, economically competitive leading-edge memory chips (along with R&D for future generations of memory), and increased capacity for generic chips. These are grand ambitions for $39 billion, and the vision document admits that this sum will not be sufficient. Implicitly at stake is the US relationship to domestic and foreign producers. Will there be an attempt to push Intel ahead of TSMC, or will the US double down on working with foreign leading-edge companies while encouraging them to manufacture in the US? Or will we see a combination of the two?11 The future of industrial policy in the US may hang in the balance: proponents of industrial policy worry that if CHIPS fails to achieve its stated goals, future investments could be jeopardized.12

There are also a number of implementation issues. The political pressure exerted by the US government through semiconductor customers like Apple, as well as car companies like GM, will temporarily compel TSMC and Samsung to bring higher-end chip production to the US. Production in the US is at least twice as costly as in Taiwan and China. While it remains to be seen who will bear those costs, companies like Apple may well pass them on to consumers. State governments have offered these foreign companies significant subsidies in the form of tax breaks, land, and other amenities, but the federal government has yet to spend a dime (though meetings on expenditure are ongoing). Without sustained investment, the outcome could repeat the pitfalls of the late 1990s, when Intel temporarily manufactured chips in Ireland because the EU both threatened to favor other companies and offered Intel subsidies. When the political pressure subsided, Intel relocated to cheaper production locales. Today, competitors have warned that Intel might similarly take federal money and build only “shells” of factories.

Despite talk of China’s threat to TSMC, the Dutch company ASML’s monopoly is arguably more significant for US security concerns about industry concentration. If TSMC imploded, Samsung and Intel could purchase equipment from ASML (though there would of course be chaos for a few years as the industry adapted). But there is no substitute for ASML: no other equipment manufacturer in the world has the expertise in EUV lithography to replace it. The US government has significant leverage in the semiconductor ecosystem, and it could pressure ASML to manufacture in the US or to share its EUV manufacturing knowledge. This is a matter of political will.

Despite public investment, the semiconductor industry is plagued by low margins, high costs, and volatile business cycles. Today, Intel is the only US company that can produce leading-edge chips, and it is already heavily subsidized. But there is an alternative to resuscitating Intel’s leading-edge business or relying on foreign firms: the US government could run a nationally owned fab. While a national factory would be costly, the US already funds and maintains access to the necessary technologies. By supplying the civilian market, a nationally owned fab could produce the volume needed to test chips for errors accurately, reducing government aid to US companies and stabilizing the businesses and consumers that rely on such fabs. Adding a national player to the chipmaking industry might be politically complex, but it would be a strategic and effective response to the overlapping challenges in the current infrastructure. Most importantly, a nationally owned fab would ease tensions with China around US access to cutting-edge chips, helping avert a global crisis in the making.

National Academies of Sciences, Engineering, and Medicine. 2003. Securing the Future: Regional and National Programs to Support the Semiconductor Industry. Washington, DC: The National Academies Press.

↩Author interview with Paolo Gargini February 18th, 2022.

↩The transfer of EUV lithography technology (the technology used to produce the current generation of leading chips) to ASML happened largely because ASML was the only company willing to make long-term investments in developing the technology after the US government pulled funding and Intel realized it couldn’t fund development alone over the long term.

↩This made some US semiconductor researchers who had been working for these companies understandably upset; they had been working on this technology for years and were told they had to teach it to their Taiwanese replacements for free, after years of fierce competition.

↩The success of Desert Storm gave US leaders an outsized sense of what information technology could offer, which has contributed to their continued deployment of drones despite recurring errors that cause significant civilian deaths.

↩They also made sure Japanese companies got big margins in the short term.


Laura Tyson and David Yoffie, writing in 1991, claim: “It was the first major US trade agreement in a high-technology, strategic industry and the first motivated by the loss of high-tech competitiveness rather than concerns about employment. It was the first US trade agreement dedicated to improving market access abroad rather than restricting market access at home.” Likewise, they write, “[u]nlike previous bilateral trade deals, it attempted to regulate trade not only in the US and Japan but in other global markets. It was the first time the US government threatened trade sanctions on Japan for failure to comply with the terms of a trade agreement.” (from “Semiconductors: From Manipulated to Managed Trade”).

Beginning in the 1980s, the federal government also offered the services of several federal agencies, including the National Institute of Standards and Technology (NIST) and the National Labs, which provided important infrastructure for semiconductor research, development, and testing, and helped direct the course of academic research. The industry became particularly reliant on NIST, which explains its extensive lobbying on NIST’s behalf when the Gingrich House attempted to eliminate the agency in the mid-1990s. By relaxing regulations, lowering taxes, and creating infrastructure for these entities, the federal government also helped create a new funding ecosystem around high tech that was highly lucrative for private participants; most of the real risk was shouldered by federal agencies, which in turn were given influence over the system as a whole.7 For example, some agencies in the 2000s formed VC firms and through those investments got seats on the boards of new tech companies.

↩According to a recent Wall Street Journal article, the Biden administration has significantly walked this back.

↩In an aside, he added “… of course Taiwanese really don’t like this idea…”

↩This quotation from Gina Raimondo seems to suggest they are leaning toward the non-Intel options: “‘We have very clear national security goals, which we must achieve,’ Ms. Raimondo said, noting that not every chip maker will get what it wants. ‘I suspect there will be many disappointed companies who feel that they should have a certain amount of money, and the reality is the return on our investment here is the achievement of our national security goal. Period.’”

↩This, despite much clearer success in solar, wind, and EV manufacturing.

